Here is a short post on a very specific Airflow use case. It is not difficult to do, but I found no straightforward documentation on it.

Use case

We have a Hive/Spark query that runs daily in Airflow. How can we make the same query run over a range of dates, so that we don't have to trigger the daily run 30 times to cover every day in a month?

This is particularly helpful in our case, where we can use the same script for historic builds over past years, or for batch correction jobs that recompute the same data over a month.

Note: Airflow already has the backfill command, which can run over a range given start and end dates, but internally it just triggers the DAG once for every single date. I was looking for a single trigger that covers the entire date range, just by modifying the query that runs over the data. [Airflow cli docs].

Approach

Airflow configs to the rescue. Airflow allows us to trigger a job from the command line with custom configs. These configs can then be used to selectively populate our query with date ranges.
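To make the idea concrete, here is a minimal sketch of a query that takes its date range as parameters. The table name `interesting_table`, the partition column `ds`, and the half-open range convention (start inclusive, end exclusive) are assumptions made for illustration, not details of our actual job.

```python
from datetime import date, timedelta

# Hypothetical table and partition column, used only for illustration.
QUERY_TEMPLATE = (
    "SELECT * FROM interesting_table "
    "WHERE ds >= '{start}' AND ds < '{end}'"
)


def build_query(start, end):
    """Render the query for the half-open date range [start, end)."""
    if start >= end:
        raise ValueError("start must be strictly before end")
    return QUERY_TEMPLATE.format(start=start.isoformat(), end=end.isoformat())


# A daily run covers exactly one day.
daily_query = build_query(date(2017, 6, 1), date(2017, 6, 1) + timedelta(days=1))

# A range run reuses the same query with a wider window.
monthly_query = build_query(date(2017, 6, 1), date(2017, 7, 1))
```

With this shape, the daily run and the month-long run differ only in the two dates handed to the query.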

So here is how we achieve it:

- The daily builds always run for a single day (typically today or yesterday).

- The range run (which will not be very frequent) can be triggered manually from the command line with custom configs.

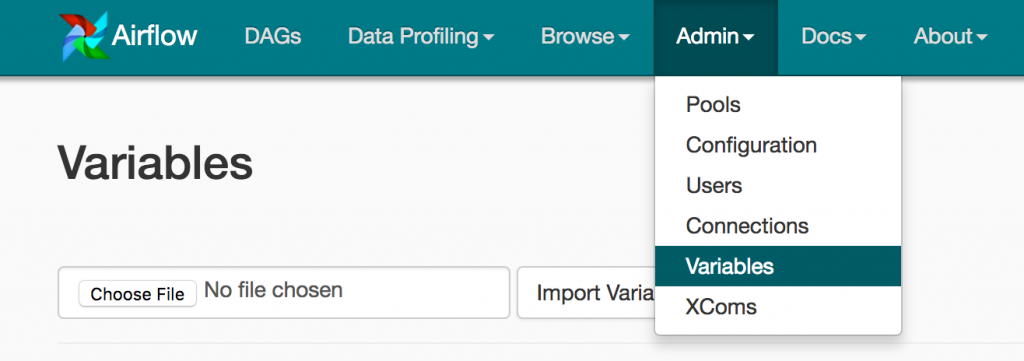

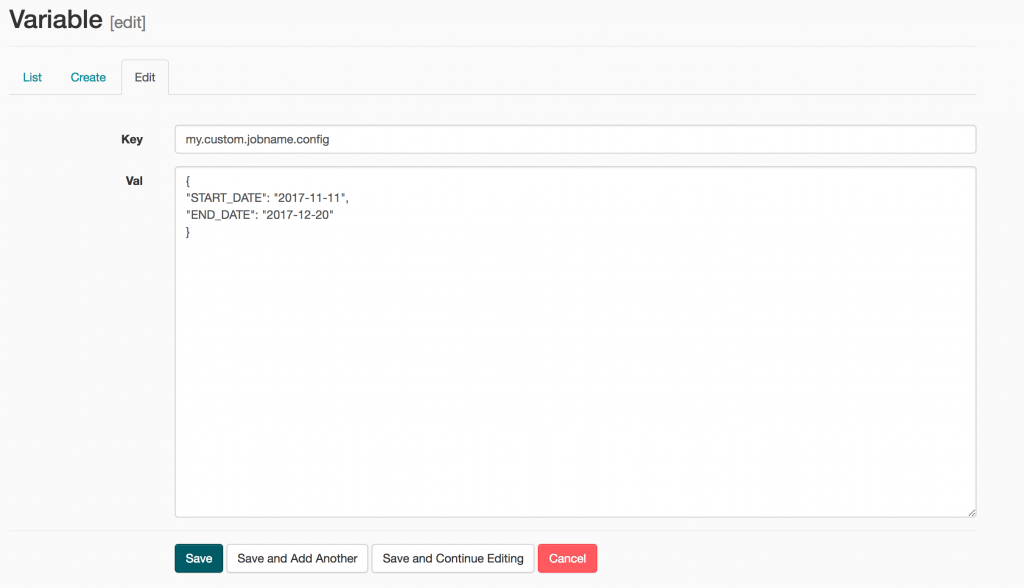

- Alternatively, the dates for the range run can be set in Airflow Variables via the web UI, and the next run will pick them up. (Recommended, less manual.)

Note: it's very important to understand that the trigger still treats this as a normal daily run, so the web interface for job monitoring gives no indication of whether a run covered a month or a single day. The Airflow web interface currently doesn't show the configs passed to the job.

Also, the month-long run will take longer to finish, so we change the DAG config to allow only a single instance running at a time (max_active_runs=1).

How it was done

Airflow DAG:

This defines our DAG, which populates the start and end dates for the run based on the config passed, and falls back to the daily dates if no config is passed. dag_run.conf gives us all the configuration that was passed to the job.
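The fallback logic can be sketched in plain Python roughly as follows. This is not the original DAG file, only an illustration of how the dates might be resolved. The function name `resolve_date_range` is hypothetical, and the half-open range (end date exclusive) is an assumption. The config keys are the ones used in the trigger command in this post.

```python
from datetime import datetime, timedelta

START_KEY = "_MANUAL_OVERRIDE_START_DATE"
END_KEY = "_MANUAL_OVERRIDE_END_DATE"
DATE_FORMAT = "%Y-%m-%d"


def resolve_date_range(conf, execution_date):
    """Return (start, end) date strings for this run.

    `conf` stands in for dag_run.conf, which may be None or empty when
    the run was not triggered with custom configs.
    """
    conf = conf or {}
    start_raw = conf.get(START_KEY)
    end_raw = conf.get(END_KEY)

    # No override at all: fall back to the single day of the run.
    if start_raw is None and end_raw is None:
        start = execution_date.date()
        return start.isoformat(), (start + timedelta(days=1)).isoformat()

    # Only one of the two keys was passed: refuse to guess the other.
    if start_raw is None or end_raw is None:
        raise ValueError("both override dates must be passed together")

    # Parsing validates the format before the dates reach the query.
    start = datetime.strptime(start_raw, DATE_FORMAT).date()
    end = datetime.strptime(end_raw, DATE_FORMAT).date()
    if start >= end:
        raise ValueError("start date must be before end date")
    return start.isoformat(), end.isoformat()
```

Inside a Python task that receives the Airflow context, this could be called as `resolve_date_range(context["dag_run"].conf, context["execution_date"])`, and the two strings then substituted into the query.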

Trigger from command line:

airflow trigger_dag <DAG_NAME> [-c config_json]

airflow trigger_dag interesting_data

airflow trigger_dag -c '{"_MANUAL_OVERRIDE_START_DATE": "2017-06-01", "_MANUAL_OVERRIDE_END_DATE": "2017-07-01"}' interesting_data
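Hand-writing the JSON for the -c flag is easy to get wrong, so the command can also be generated. The sketch below only builds the command; the helper names `build_trigger_command` and `as_shell` are hypothetical, and it assumes the same `airflow trigger_dag` CLI shown above.

```python
import json
import shlex


def build_trigger_command(dag_id, start_date=None, end_date=None):
    """Build the argv list for a daily or a range trigger."""
    argv = ["airflow", "trigger_dag"]
    if start_date is not None and end_date is not None:
        conf = {
            "_MANUAL_OVERRIDE_START_DATE": start_date,
            "_MANUAL_OVERRIDE_END_DATE": end_date,
        }
        # json.dumps guarantees the config is valid JSON.
        argv += ["-c", json.dumps(conf)]
    argv.append(dag_id)
    return argv


def as_shell(argv):
    """Quote each argument so the command can be pasted into a shell."""
    return " ".join(shlex.quote(arg) for arg in argv)


range_command = build_trigger_command("interesting_data", "2017-06-01", "2017-07-01")
print(as_shell(range_command))
```

The argv list can be passed directly to `subprocess.run` if the trigger should be issued from a script rather than typed by hand.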

Note: the daily runs don't have to be triggered from the command line; Airflow's scheduler takes care of that for us. It's only the manual range run that we need to trigger from the command line.

Fetch the start and end dates from Airflow variables:

If you would rather not trigger from the command line, START_DATE and END_DATE can also be fetched from Airflow Variables, which can be set in the web UI; the next run will pick them up. Note: you need to delete the variables once a run has picked them up, otherwise every subsequent run will keep running over the same date range.
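A rough sketch of the Variable-based lookup is below. To keep it runnable without an Airflow installation, the variable store is passed in as a function; `range_from_variables` and `get_variable` are hypothetical names, and the half-open daily range is again an assumption.

```python
from datetime import date, timedelta


def range_from_variables(get_variable, run_date):
    """Return (start, end, overridden) for this run.

    `get_variable(name)` must return the variable's value, or None
    when the variable is not set.
    """
    start = get_variable("START_DATE")
    end = get_variable("END_DATE")
    if start and end:
        # Both variables are set: this run covers the requested range.
        return start, end, True
    # Otherwise behave like a normal daily run.
    next_day = run_date + timedelta(days=1)
    return run_date.isoformat(), next_day.isoformat(), False


# A plain dict stands in for the Airflow Variable store here.
store = {"START_DATE": "2017-06-01", "END_DATE": "2017-07-01"}
override = range_from_variables(store.get, date(2017, 8, 1))
daily = range_from_variables({}.get, date(2017, 8, 1))
```

In a real DAG, `get_variable` could be `lambda name: Variable.get(name, default_var=None)` with `Variable` imported from `airflow.models`. The `overridden` flag is there as a reminder that the variables still have to be deleted after the range run.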

Setting the Variable in Airflow via the web UI. This will override the dates for our run.

I hope this short post is helpful for similar use cases.